Software-Defined Access

By now I’m sure you have heard the new product announcements from Cisco around Software-Defined Access (SD-Access). This announcement is a departure from the typical “new box” announcements that we are familiar with from the networking behemoth. Yes, there are new boxes, but this announcement is much more than that - it is the beginning of a new era for networking. Cisco marketing will appreciate me clarifying that SD-Access is a part of The Network. [pause for dramatic effect] Intuitive. Now, I’ll be the first one to cringe or poke fun at marketing terminology used in the IT industry, and this one certainly seems ripe for the poking. However, based on what I have seen thus far, there is nothing to cringe at here.

The Problems It Solves

As amazing as new technology is, it is only valuable to your organization if it solves business IT problems. The SD-Access solution is intended to solve the following business IT challenges.

- Host Mobility (without stretching VLANs)

- Network Segmentation (without implementing MPLS)

- Role-based Access Control (without end-to-end TrustSec)

- Common Policy for Wired and Wireless (without using multiple tools)

- Consistency Across Campus, WAN, and Branch (without using multiple tools)

Overview

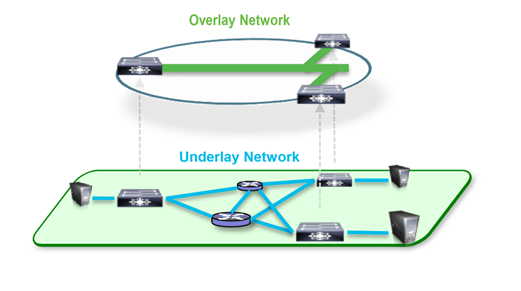

SD-Access is an evolution of a solution Cisco has been shipping for about a year now called Campus Fabric. The Campus Fabric solution is a virtual overlay network built on top of the traditional campus network. The point to grasp here is that the traditional network does not go away in the SD-Access solution. On the contrary, it is more important than ever and takes on the role of the “underlay network”.

DNA Center

What makes the SD-Access solution unique when compared to the Campus Fabric solution is how it is provisioned and operated. Provisioning the Campus Fabric involved complex, manual CLI configuration on a per-device basis in order to set up the fabric. Operating the Campus Fabric was done using a combination of tools and a myriad of “single panes of glass”. SD-Access uses a software-defined networking approach that employs a central “controller” for provisioning and operation. This brings us to Cisco DNA Center. DNA Center is the software-defined network controller for the SD-Access solution.

DNA Center is fundamentally a new graphical user interface for the existing APIC-EM solution (although I’m sure this is an oversimplification). It integrates tightly with Cisco Identity Services Engine (ISE) and Cisco Network Data Platform (NDP). ISE and NDP deserve their own blog post, so they’ll get only a brief mention here. ISE provides user information and network access security services to the SD-Access solution. NDP provides analytics and assurance services to the SD-Access solution. Both are consumed by the installer/operator through the DNA Center UI. To me, DNA Center is the most exciting part of the SD-Access announcement. I anticipate SD-Access is just the first of many solutions to come that will leverage the same DNA Center for automation. Let’s just say that it would make a whole lot of sense to have DNA Center automate the SD-WAN solution that ties together disparate SD-Access fabrics. And Cisco has a few of those (IWAN, Viptela, and Meraki)…

Of course, this is only speculation.

Campus Fabric

Now that we know the SD-Access solution is an automated version of the Campus Fabric solution (thanks to DNA Center!), let’s take a closer look at the components that make up the Campus Fabric. The diagram below depicts all of the components of the SD-Access solution (which includes the Campus Fabric).

Campus Fabric - Control Plane

The Campus Fabric has two control planes: the overlay network control plane and the underlay network control plane.

Underlay Network Control Plane

The underlay control plane uses the same technologies that we design our LANs with today, which is what makes migration to the Campus Fabric straightforward: we are simply adding an overlay to the existing network. In a greenfield deployment of Campus Fabric, the underlay network would follow an updated best practice and be composed almost entirely of redundant routed links (there would be very little, if any, Layer 2 connectivity). The underlay control plane’s primary purpose is to provide connectivity between the overlay network’s Routing Locators, which are discussed in the next section.

Overlay Network Control Plane

The overlay control plane is based on an industry standard protocol: the Locator/ID Separation Protocol (LISP). Traditionally, a computer’s IP address tells us both who the computer is on the network (identity) and where to reach it (location). This “overloads” the meaning of the IP address and has the unfortunate constraint of making an IP address valid only when it ties back to a particular location in the network. In LISP, a computer’s IP address tells us only who the computer is (identity); a separate mechanism tells us where the computer is (location). LISP uses the concept of a Routing Locator (RLOC) to represent the location of a given computer on the network.

In a traditional network, this would equate to host-based routing. Imagine a network where every single host has an entry in the routing table. That would place far too much demand on routing table memory and CPU (network-wide re-convergence would happen every time a laptop powered on or off!). This is, in fact, one of the reasons a computer’s IP address was designed to convey both identity and location: all the computer IP addresses in a particular subnet can be represented by a single entry in the routing table (to save memory), and re-convergence occurs only if the subnet router goes down or comes up (to save CPU).

LISP resolves the host routing scalability issue by introducing a mapping system, from computer IP address (identity) to computer RLOC (location), that behaves very much like DNS (as shown below).

In the Campus Fabric, the Control-Plane Node is the LISP mapping system (and yes, you can have two of them for redundancy!). See the infographic below for additional details on the Control-Plane Node.
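To make the DNS comparison concrete, here is a minimal Python sketch of an identity-to-location mapping table. It is a toy model only, not how LISP or the Control-Plane Node is actually implemented; the class name, method names, and all IP addresses are made up for illustration.

```python
from typing import Dict, Optional


class MappingSystem:
    """Toy stand-in for the LISP mapping system (hypothetical, for illustration)."""

    def __init__(self) -> None:
        # Endpoint IP (identity) -> RLOC (location of the fabric node it sits behind)
        self._identity_to_rloc: Dict[str, str] = {}

    def register(self, endpoint_ip: str, rloc: str) -> None:
        """Record (or update) where an endpoint currently lives."""
        self._identity_to_rloc[endpoint_ip] = rloc

    def resolve(self, endpoint_ip: str) -> Optional[str]:
        """Answer "where is this endpoint?", much like a DNS lookup."""
        return self._identity_to_rloc.get(endpoint_ip)


mapping = MappingSystem()
mapping.register("10.1.1.20", "192.168.255.1")  # laptop behind fabric node 1
print(mapping.resolve("10.1.1.20"))

# The laptop moves to fabric node 2: same identity, new location.
mapping.register("10.1.1.20", "192.168.255.2")
print(mapping.resolve("10.1.1.20"))
```

Notice that the laptop keeps its IP address when it moves; only the mapping entry changes. That is the essence of host mobility without stretching VLANs.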

Campus Fabric - Data Plane

Although a LISP tunnel could be used for data plane traffic, it only supports tunneling Layer 3 traffic. Because the Campus Fabric emulates a traditional Layer 2 network, it needs to support tunneling Layer 2 traffic. Thus, the Campus Fabric data plane is based on another industry standard protocol that does just that: Virtual Extensible LAN (VXLAN).

When a Campus Fabric Edge/Border node needs to forward a packet to a computer IP address (identity), it sends a resolution request to the Control-Plane node to find out what the RLOC (location) is for the computer (it can then cache this mapping for future use). The Edge/Border node then uses the RLOC as the destination IP of the VXLAN encapsulation to forward along the original packet.
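The resolve, cache, and encapsulate sequence described above can be sketched in a few lines of Python. This is a simplified model under stated assumptions: the `EdgeNode` and `EncapsulatedPacket` names are hypothetical, the Control-Plane node is reduced to a lookup function, and the encapsulation is a plain data structure rather than real VXLAN bytes.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass
class EncapsulatedPacket:
    outer_dst: str       # RLOC of the node the destination sits behind
    inner_packet: bytes  # original packet, carried unchanged


class EdgeNode:
    """Toy Edge/Border node (hypothetical, for illustration)."""

    def __init__(self, resolver: Callable[[str], Optional[str]]) -> None:
        self._resolver = resolver             # stands in for a request to the Control-Plane node
        self._map_cache: Dict[str, str] = {}  # identity -> RLOC mappings learned so far
        self.requests_sent = 0                # how many resolution requests were needed

    def forward(self, dst_ip: str, packet: bytes) -> Optional[EncapsulatedPacket]:
        rloc = self._map_cache.get(dst_ip)
        if rloc is None:
            # Cache miss: ask the control plane where the destination is.
            self.requests_sent += 1
            rloc = self._resolver(dst_ip)
            if rloc is None:
                return None  # destination unknown; this sketch simply gives up
            self._map_cache[dst_ip] = rloc
        # Use the RLOC as the destination of the outer (encapsulating) header.
        return EncapsulatedPacket(outer_dst=rloc, inner_packet=packet)


control_plane = {"10.1.1.20": "192.168.255.2"}  # made-up mapping table
edge = EdgeNode(control_plane.get)
first = edge.forward("10.1.1.20", b"hello")
second = edge.forward("10.1.1.20", b"again")
print(first.outer_dst, second.outer_dst, edge.requests_sent)
```

The second packet is forwarded from the cache, so only one resolution request reaches the control plane.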

Campus Fabric - Policy Plane

The Campus Fabric policy plane is made up of two components: Virtual Routing and Forwarding (VRF) and Scalable Group Tags (SGT). In the Campus Fabric, a Virtual Network is analogous to a VRF. The VRF provides macro-segmentation (endpoints cannot speak to one another because they are on completely different virtual networks that have no router between them). The SGT provides micro-segmentation (endpoints are on the same virtual network, but whether or not they can speak to one another is determined by the policy applied between their respective group tags). In the Campus Fabric solution, you can employ macro-segmentation (VRFs), micro-segmentation (SGTs), or both! The VRF and SGT information is carried inside the VXLAN header (as shown below).
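The way the two levels of segmentation combine can be sketched as a two-step check: first the virtual network, then the group policy. The sketch below is only a mental model, not how the fabric enforces policy. The virtual network names, tag values, policy table, and the default-deny behavior for unlisted tag pairs are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class Endpoint:
    name: str
    virtual_network: str  # macro-segmentation (VRF)
    group_tag: int        # micro-segmentation (SGT)


# Hypothetical policy: (source tag, destination tag) -> permitted?
GROUP_POLICY: Dict[Tuple[int, int], bool] = {
    (10, 20): True,   # employees may reach printers
    (30, 20): False,  # contractors may not
}


def may_communicate(src: Endpoint, dst: Endpoint, default_permit: bool = False) -> bool:
    # Macro-segmentation: different virtual networks never talk directly.
    if src.virtual_network != dst.virtual_network:
        return False
    # Micro-segmentation: same virtual network, so the group policy decides.
    # Unlisted tag pairs fall back to default_permit (an assumption of this sketch).
    return GROUP_POLICY.get((src.group_tag, dst.group_tag), default_permit)


employee = Endpoint("employee-laptop", "CORP", 10)
contractor = Endpoint("contractor-laptop", "CORP", 30)
printer = Endpoint("printer", "CORP", 20)
camera = Endpoint("camera", "IOT", 20)

print(may_communicate(employee, printer))
print(may_communicate(contractor, printer))
print(may_communicate(employee, camera))
```

Only the first pair is permitted: the contractor is blocked by group policy, and the camera is blocked because it lives in a different virtual network, regardless of its tag.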

Key Takeaways

This is only the beginning of the next era of networking. The SD-Access solution is a major evolutionary step in simplifying the complexity in our networks while retaining the ability to provide flexible, highly sophisticated network solutions to our organizations.


SD-Access is a new technology, albeit one built upon tried and proven network protocols. As with any new technology, I would advise my clients to exercise a certain amount of caution before a full production deployment. In the meantime, take some time to strategize on your journey. Ask yourself whether your network hardware and software are ready to support the necessary protocols and encapsulations (e.g. LISP and VXLAN). If not, map out a plan to get there. Plan a proof of concept in your lab or a small office. Familiarize yourself with the protocols and skill sets this new era of networking requires. Thanks for reading!

Written By: Dominic Zeni, LookingPoint Consulting Services SME - CCIE #26686

Sources: CiscoLive - (Must Have a Cisco CCO Account to Login)